Amazing Tools To Find Duplicate Files And Remove Them In Linux

Find duplicate files and remove them using these amazing tools. In this article I am going to list tools that find duplicate files in Linux. Finding and removing duplicates by hand is tedious and error-prone, so to make the job easier I have collected a few tools that do it for you.

fdupes Finds Duplicate Files in a Given Set of Directories

fdupes is a command-line utility to find duplicate files and delete them. It was developed by Adrian Lopez. On RHEL/CentOS the package is available from the EPEL repository, so enable EPEL before installing it.

Installing fdupes on RHEL 7/CentOS 7

# yum install fdupes

fdupes searches the given path for duplicate files. Duplicates are found by comparing file sizes and MD5 signatures, followed by a byte-by-byte comparison. For testing, I created a few files with the same content and searched for duplicates within that directory.

```
[root@ArkItServ ~]# fdupes -Sr dupesarkit/
24 bytes each:
dupesarkit/ArkIT5.txt
dupesarkit/ArkIT6.txt
dupesarkit/ArkIT7.txt
dupesarkit/ArkIT9.txt

0 bytes each:
dupesarkit/ArkIT1.txt
```
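To make the three stages concrete, here is a minimal Python sketch of the size, then MD5, then byte-by-byte approach described above. It is an illustration under my own assumptions, not fdupes' actual source (fdupes is written in C). The function names find_duplicates, md5_of and same_bytes are hypothetical.

```python
import hashlib
import os
from collections import defaultdict


def md5_of(path, chunk_size=65536):
    """Return the MD5 hex digest of a file, read in chunks."""
    h = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def same_bytes(a, b, chunk_size=65536):
    """Compare two files byte by byte."""
    with open(a, "rb") as fa, open(b, "rb") as fb:
        while True:
            ca, cb = fa.read(chunk_size), fb.read(chunk_size)
            if ca != cb:
                return False
            if not ca:  # both files ended at the same point
                return True


def find_duplicates(root):
    """Return sets of paths under root that have identical content."""
    # Stage 1: bucket regular files by size; different sizes never match.
    by_size = defaultdict(list)
    for dirpath, _dirs, names in os.walk(root):
        for name in names:
            path = os.path.join(dirpath, name)
            if os.path.isfile(path) and not os.path.islink(path):
                by_size[os.path.getsize(path)].append(path)

    sets = []
    for paths in by_size.values():
        if len(paths) < 2:
            continue
        # Stage 2: bucket same-size files by their MD5 signature.
        by_hash = defaultdict(list)
        for p in paths:
            by_hash[md5_of(p)].append(p)
        for candidates in by_hash.values():
            # Stage 3: confirm matches byte by byte against one reference file.
            while len(candidates) > 1:
                first, rest = candidates[0], candidates[1:]
                group = [first] + [p for p in rest if same_bytes(first, p)]
                if len(group) > 1:
                    sets.append(sorted(group))
                candidates = [p for p in rest if p not in group]
    return sets
```

Calling find_duplicates("dupesarkit") returns each set as a sorted list of paths, roughly what fdupes -r prints without the sizes. The cheap size check runs first so that only same-sized files are ever hashed or read in full.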

fdupes command options

fdupes has many options, so let’s go through them one by one.

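- -r – Search for duplicates in the given directory and all of its subdirectories.

- -R (--recurse:) – Follow subdirectories only for the directories listed after this option (note the “:” at the end). Directories listed before it are searched without recursion.

Example: fdupes apple --recurse: boy searches the apple directory itself (not its subdirectories), plus boy and all of its subdirectories, and reports duplicates across both. Likewise, fdupes dir1 --recurse: dir2 finds duplicates across dir1 and dir2 but descends only into the subdirectories of dir2.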

- -s – Follow symlinked directories as well.

- -H – Normally, files that point to the same disk area (hard links) are not treated as duplicates. With -H they are reported as duplicates too.

- -n – Exclude zero-length files.

- -f – Omit the first file in each set of matches.

- -A – Exclude hidden files and directories from consideration.

- -1 – List each set of matches on a single line.

- -S – Show the size of duplicate files in bytes.

- -m – Display a summary instead of listing file names.

Example:

```
# fdupes -m dupesarkit/
8 duplicate files (in 2 sets), occupying 72 bytes.
```

- -q (--quiet) – Hide the progress indicator while searching for duplicates.

- -d (--delete) – Prompt for the files to preserve in each set and delete all the others.

```
[root@ArkItServ ~]# fdupes -r dupesarkit/ -d
[1] dupesarkit/ArkIT5.txt
Set 1 of 2, preserve files [1 - 8, all]: 1

   [+] dupesarkit/ArkIT5.txt

[1] dupesarkit/ArkIT1.txt
Set 2 of 2, preserve files [1 - 12, all]:
Set 2 of 2, preserve files [1 - 12, all]: all

   [+] dupesarkit/ArkIT1.txt
```

- -N – When used together with -d, skip the prompt: preserve the first file in each set of duplicates and delete the rest without asking for confirmation.

- -I – Delete duplicates as they are encountered, without grouping into sets.

- -p – Don’t treat files as duplicates if they have a different owner, group, or permission bits.

- -o BY (--order=BY) – Select the sort order used for output and deletion: by modification time (time, the default) or by file name (name).

- -i – Reverse the sort order.

- -v – Display the installed fdupes version.

```
# fdupes -v
fdupes 1.6.1
```
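Because -N keeps the first file of each set, the sort order chosen with -o and -i decides which copy survives. The sketch below models that choice as a dry run. It orders a set by modification time or by name, optionally reverses it, keeps the first entry and lists the rest for deletion. The SORT_KEYS table, the plan_deletions name and the name-based tie-breaking are my assumptions, not fdupes internals.

```python
import os

# Sort keys loosely modelled on fdupes -o time / -o name (assumption).
SORT_KEYS = {
    "time": lambda p: (os.stat(p).st_mtime, p),  # oldest first, name breaks ties
    "name": lambda p: p,
}


def plan_deletions(dup_set, order="time", reverse=False):
    """Return (kept, to_delete) for one set of duplicate paths.

    reverse=True mirrors -i. Nothing is deleted here: this only plans
    what a prompt-free delete (-d -N) would do with the set.
    """
    ranked = sorted(dup_set, key=SORT_KEYS[order], reverse=reverse)
    return ranked[0], ranked[1:]
```

To actually delete, you would call os.remove on each path in the second element. Keeping the planning step separate makes it easy to review the result before anything is removed.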

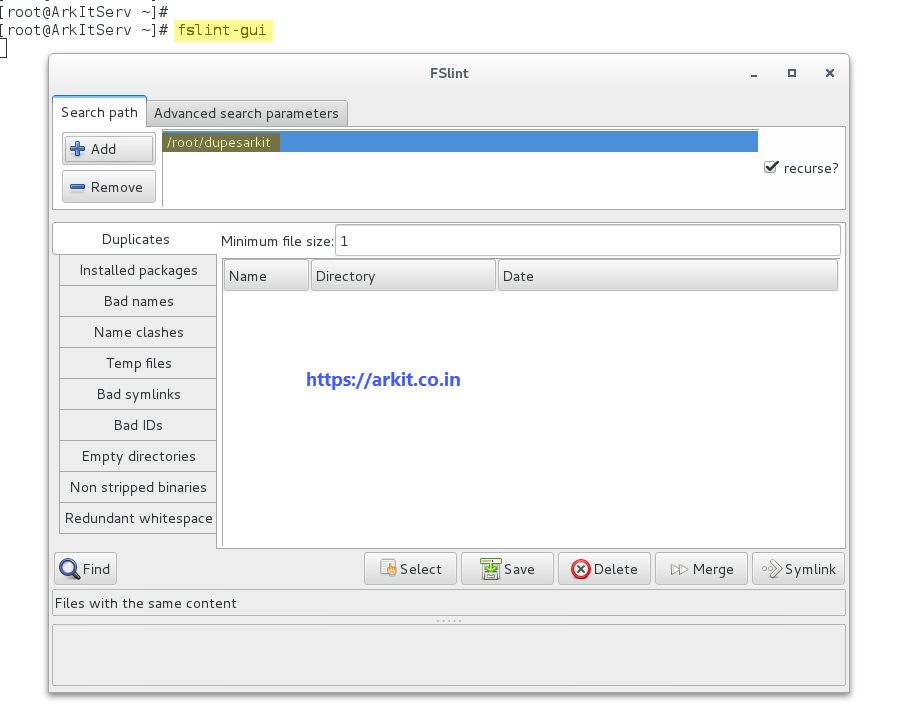
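The numbers in the fdupes -m summary can be checked by hand. As I read it, each set contributes every file except one (the copy you would keep). Under that reading, a set of n files of size s counts as n - 1 duplicate files occupying (n - 1) × s bytes. The summarize function and its (size, count) input below are a hypothetical model of that arithmetic, not code from fdupes.

```python
def summarize(sets):
    """Model a duplicate summary.

    sets: list of (size_in_bytes, number_of_files) tuples, one per set.
    Every file beyond the first in a set counts as a redundant copy.
    """
    dup_files = sum(count - 1 for _size, count in sets)
    dup_bytes = sum((count - 1) * size for size, count in sets)
    return dup_files, len(sets), dup_bytes


# The 24-byte set from the -S output has four files: three redundant copies.
files, nsets, nbytes = summarize([(24, 4)])
print(f"{files} duplicate files (in {nsets} sets), occupying {nbytes} bytes.")
# prints: 3 duplicate files (in 1 sets), occupying 72 bytes.
```

Those three redundant 24-byte copies account for the 72 bytes in the example. Zero-byte duplicates add to the file count but not to the space.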

FSlint Utility to Clean Problematic Files (Duplicates)

FSlint is a GUI wrapper and toolset for finding various problems with file systems, including duplicate files. To install it, clone FSlint from Git, build an RPM from the source, and install it with the rpm command.

```
# git clone https://github.com/pixelb/fslint.git fslint-2.47
# tar -czf fslint-2.47.tar.gz --exclude=.git fslint-2.47
# rpmbuild -ta fslint-2.47.tar.gz
warning: bogus date in %changelog: Thu Dec 16 2002 Pádraig Brady
Executing(%prep): /bin/sh -e /var/tmp/rpm-tmp.jJDK71
# rpm -Uvh rpmbuild/RPMS/noarch/fslint-2.47-1.noarch.rpm
Preparing...                          ################################# [100%]
Updating / installing...
   1:fslint-2.47-1                    ################################# [100%]
```

Findup Script to Find Duplicates

You can use the FSlint GUI window to look for problems or duplicates. Alternatively, you can run the findup script, which is part of the FSlint toolset, from the command line.

```
[root@ArkItServ ~]# /usr/share/fslint/fslint/findup /root/dupesarkit/
ARKit          <-- Duplicate File
FSLint10.txt   <-- Duplicate File
TestFslint     <-- Duplicate File
```

- -m – Find duplicate files and merge them using hard links.

- -s – Replace duplicate files with symbolic links.

- -d – Delete duplicates, leaving one copy as the original.
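Merging with hard links means every duplicate path ends up pointing at the same inode, so the data is stored only once. A common, safe way to do this is to create the new link under a temporary name and then atomically rename it over the duplicate. The sketch below shows that technique in Python. It is not findup's own code. The function name merge_with_hardlinks and the ".tmp-link" suffix are hypothetical, and it assumes all paths are on the same filesystem, because hard links cannot cross filesystems.

```python
import os


def merge_with_hardlinks(dup_set):
    """Replace every file in dup_set with a hard link to the first one.

    Assumes the files were already verified identical and live on the
    same filesystem (os.link raises OSError across filesystems).
    """
    original, *others = dup_set
    for path in others:
        if os.path.samefile(original, path):
            continue  # already the same inode, nothing to do
        tmp = path + ".tmp-link"  # hypothetical temporary name
        os.link(original, tmp)    # add a second name for the original inode
        os.replace(tmp, path)     # atomically swap it over the duplicate
```

Renaming over the duplicate, rather than deleting it first, means the path never disappears, even briefly, if the process is interrupted.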

Examples of using findup

```
findup -m /directoryPath          ## merge duplicates using hard links
findup / -user $(id -u arkit)     ## only search files owned by user arkit
findup / -size +100k              ## only search files larger than 100k
```

jdupes Finds and Deletes Duplicates Faster

jdupes is a fork of fdupes. Why use it instead of the original? Its raw search is reported to be up to 7 times faster than fdupes, and some of its options differ. jdupes compares files by size, then by partial and full file hashes, and finally byte by byte. Most options work as they do in fdupes. The ones that are new or behave differently include -I, -x, -z and -Z.

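- -I (--isolate) – Files in the same specified directory are never matched against each other.

- -x SIZE (--xsize=SIZE) – Exclude files smaller than SIZE bytes from consideration.

- -z (--zeromatch) – Consider zero-length files to be duplicates of each other.

- -Z (--softabort) – If you abort the run (for example with Ctrl-C), act on the matches found so far.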
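The partial-hash stage is what lets jdupes skip most of the reading. Files of equal size are first compared by a hash of just their first block, and only files that still collide are hashed in full. Here is a minimal Python sketch of that idea. The 4096-byte block size, the use of hashlib.blake2b and the function names are my assumptions for illustration. jdupes uses a different hash and its own tuning.

```python
import hashlib
import os
from collections import defaultdict

PARTIAL_BYTES = 4096  # assumed size of the "partial" block


def file_hash(path, limit=None, chunk_size=65536):
    """Hash the first `limit` bytes of a file, or the whole file if None."""
    h = hashlib.blake2b()
    remaining = limit
    with open(path, "rb") as f:
        while remaining is None or remaining > 0:
            n = chunk_size if remaining is None else min(chunk_size, remaining)
            chunk = f.read(n)
            if not chunk:
                break
            h.update(chunk)
            if remaining is not None:
                remaining -= len(chunk)
    return h.hexdigest()


def refine(groups, key):
    """Split each group by key(path), keeping only sub-groups of 2+ files."""
    out = []
    for group in groups:
        buckets = defaultdict(list)
        for path in group:
            buckets[key(path)].append(path)
        out.extend(b for b in buckets.values() if len(b) > 1)
    return out


def candidate_sets(paths):
    """Size, then partial hash, then full hash; a byte check would follow."""
    groups = refine([list(paths)], os.path.getsize)
    groups = refine(groups, lambda p: file_hash(p, PARTIAL_BYTES))
    return refine(groups, file_hash)
```

Each stage only sees files that survived the previous one. Large files that differ early are therefore ruled out after reading just one block.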

```
# git clone https://github.com/jbruchon/jdupes.git
# cd jdupes
# make
# make install
```

Examples:

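```
jdupes -O dir1 dir3 dir2
```

Here -O (--paramorder) makes the order of the directories on the command line decide the order within each match set. Files under dir1 come first, then dir3, then dir2.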
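This ordering matters when -O is combined with options such as -N that keep only the first file of a set. As I understand the rule, each file is ranked by the position of the command-line directory it was found under. The sketch below models that ranking. The helper names param_rank and order_match_set are hypothetical, and jdupes' real tie-breaking may differ.

```python
import os


def param_rank(path, roots):
    """Index of the first command-line root that contains path."""
    real = os.path.abspath(path)
    for i, root in enumerate(roots):
        root = os.path.abspath(root)
        if os.path.commonpath([real, root]) == root:
            return i
    return len(roots)  # not under any root: rank it last


def order_match_set(match_set, roots):
    """Sort a match set by root order, using the path as a tie-breaker."""
    return sorted(match_set, key=lambda p: (param_rank(p, roots), p))


roots = ["dir1", "dir3", "dir2"]  # as in: jdupes -O dir1 dir3 dir2
print(order_match_set(["dir2/a.txt", "dir1/a.txt", "dir3/a.txt"], roots))
# prints: ['dir1/a.txt', 'dir3/a.txt', 'dir2/a.txt']
```

Using os.path.commonpath instead of a plain string prefix check keeps a path such as dir10/x from being counted as inside dir1.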


dupedit – Compares many files at once without checksumming, and avoids comparing a file against itself when multiple paths point to the same file. It is mainly used on SUSE Linux, but you can also use it on RHEL 7/CentOS 7 by installing its RPM files.

dupedit 64 Bit Download Link

# wget http://download.opensuse.org/repositories/home:/adra/openSUSE_Tumbleweed/x86_64/dupedit-5.5.1-1.25.x86_64.rpm

32 Bit Download Link

# wget http://download.opensuse.org/repositories/home:/adra/openSUSE_Tumbleweed/i586/dupedit-5.5.1-1.25.i586.rpm

```
# dupedit dupesarkit/
-- #0 -- 50 B --
0.0 dupesarkit/TestFslint
0.1 dupesarkit/ARKit
0.2 dupesarkit/FSLint10.txt
In total: 3 duplicate files containing 100 bytes duplicate data
```
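dupedit combines two ideas: it compares a whole group of files in one pass without checksums, and it recognises when several paths are really the same file. The sketch below illustrates both. It is my own illustration, not dupedit's algorithm. It drops paths that share a (device, inode) pair, then reads every remaining file of a group in lockstep, one block at a time, and splits the group whenever the blocks differ. The function names and the block size are assumptions.

```python
import os
from collections import defaultdict

BLOCK = 65536  # assumed read size


def unique_files(paths):
    """Keep one path per underlying file, identified by (st_dev, st_ino)."""
    seen, out = set(), []
    for p in paths:
        st = os.stat(p)
        ident = (st.st_dev, st.st_ino)
        if ident not in seen:
            seen.add(ident)
            out.append(p)
    return out


def compare_group(paths):
    """Split candidate files into sets of identical content, no checksums."""
    paths = unique_files(paths)
    handles = {p: open(p, "rb") for p in paths}
    try:
        pending, done = [paths], []
        while pending:
            group = pending.pop()
            if len(group) < 2:
                continue
            # Read the next block of every file in the group, side by side.
            by_block = defaultdict(list)
            for p in group:
                by_block[handles[p].read(BLOCK)].append(p)
            for block, members in by_block.items():
                if len(members) < 2:
                    continue  # a file with a unique block has no duplicate
                if block == b"":
                    done.append(members)  # ended together: identical
                else:
                    pending.append(members)
        return done
    finally:
        for f in handles.values():
            f.close()
```

Every sub-group has had the same number of blocks read from each of its files, so the files in it are always compared at the same offset.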

Other Tools to Find Duplicate Files

- dupmerge – A Perl script with an algorithm optimized to reduce disk reads.

- liten2 – A rewrite of the original Liten. It is still a command-line tool, but with a faster interactive mode that uses SHA-1 checksums.

- rdfind – One of the few tools that ranks duplicates by the order of the input parameters, so that files in “original” or well-known locations are not deleted. Uses MD5 or SHA-1.

- rmlint – A fast finder with a command-line interface and many options. It also finds other kinds of lint and uses MD5 hashes.

- ua – A Unix/Linux command-line tool designed to work with the find command.

- findrepe – A free Java-based command-line tool for efficiently searching for duplicate files. It can also search inside zip and jar archives.

- fdupe – A small Perl script that does its job quickly and efficiently.

- ssdeep – Identifies almost-identical files using context triggered piecewise hashing (CTPH).
